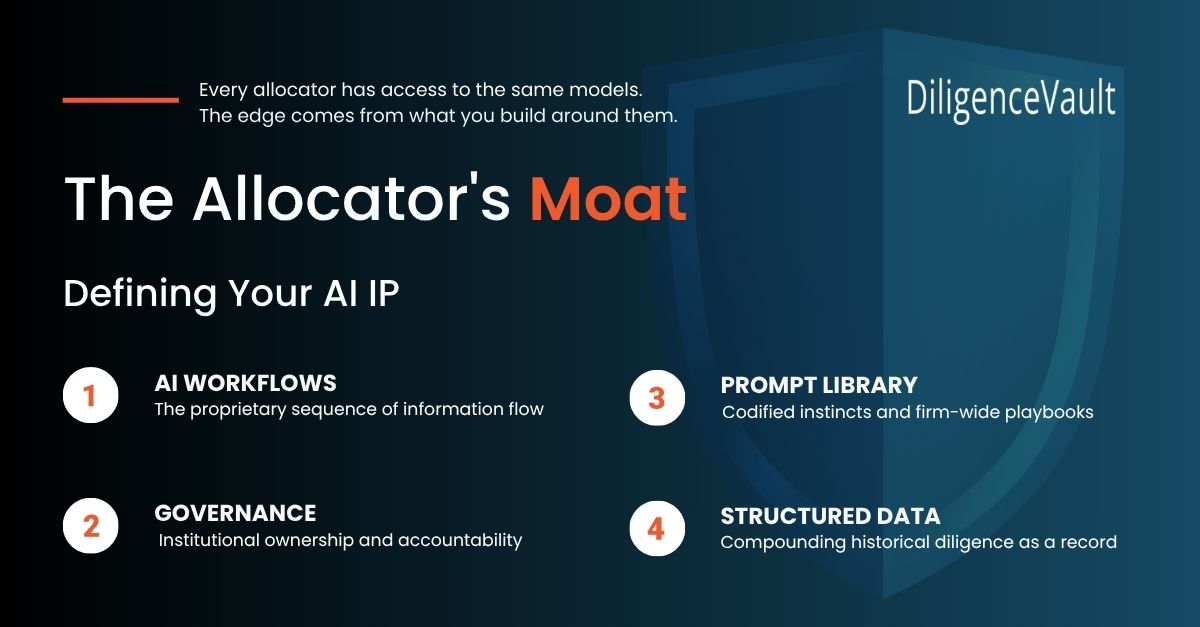

For allocators thinking about AI as a long-term competitive advantage.

Today, every allocator has access to the same AI models.

GPT. Claude. Gemini.

They are quickly becoming infrastructure, the equivalent of cloud storage or Excel. Useful and powerful, but not a source of lasting advantage on their own.

Which raises a more interesting question.

If everyone has access to the same intelligence, where does the edge come from?

Right now, most teams are using AI in fairly tactical ways: summarizing documents, drafting memos, automating pieces of diligence, and saving analyst hours.

That is all good progress, but it is not a moat.

The real differentiation will come from something deeper: how firms encode their judgment into the systems they build around AI. The gap between a generic AI output and a genuinely useful investment insight is not really about the model; it is about the institutional knowledge wrapped around it.

Here is where that shows up:

1. Your prompts eventually become your AI playbook

A single prompt does not matter much.

But over time, the prompts a team develops start to reflect how they actually think. What questions do they ask first? What risks do they probe? What patterns have they learned to recognize across hundreds of manager relationships?

One team might ask an AI to summarize a manager. Another pushes it to test key-person risk against historical failure patterns, or to surface operational inconsistencies across multiple diligence cycles, or to flag when a GP’s narrative has shifted between vintages.

Those prompts gradually become more valuable than they appear. They become a codified version of the firm’s instincts, a digital playbook that captures not just what the team knows, but how it thinks.

The firms building real IP here are not just collecting prompts. They are building prompt libraries that are systematized, tested, and maintained like any other institutional process: tuned to their mandate, refined after every diligence cycle, and owned by the firm rather than scattered across individual analyst laptops.
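As a rough illustration of what "maintained like any other institutional process" could look like, here is a minimal Python sketch of a prompt library in which every prompt has a named owner and every revision records when and why it changed. The class names, field names, and example prompts are all hypothetical; this is one possible shape, not a prescribed design.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptVersion:
    text: str
    changed_on: date
    reason: str  # why the prompt was revised


@dataclass
class PromptEntry:
    name: str
    owner: str  # the person accountable for this prompt (hypothetical role name)
    versions: list[PromptVersion] = field(default_factory=list)

    def revise(self, text: str, changed_on: date, reason: str) -> None:
        """Append a new version instead of overwriting the old one."""
        self.versions.append(PromptVersion(text, changed_on, reason))

    @property
    def current(self) -> str:
        """Return the text of the most recent version."""
        return self.versions[-1].text


# The library itself is just a name -> entry mapping owned by the firm.
library: dict[str, PromptEntry] = {}

entry = PromptEntry(name="key_person_risk", owner="head_of_odd")
entry.revise(
    "Summarize key-person risk for {manager}.",
    date(2024, 1, 15),
    "initial version",
)
entry.revise(
    "Assess key-person risk for {manager} and compare it with prior diligence cycles.",
    date(2024, 6, 30),
    "post-cycle review: summary alone missed changes over time",
)
library[entry.name] = entry

print(library["key_person_risk"].current)
for version in entry.versions:
    print(version.changed_on, "-", version.reason)
```

Keeping old versions alongside the reason for each change is what turns a folder of prompts into a record of how the team's thinking evolved.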

2. Process matters more than the model in the long run

Another overlooked source of advantage is simply how information flows through the diligence process.

Allocators rarely make decisions based on a single document or a single analysis. Insights emerge from the combination of many things: a DDQ from this year, an operational report from several cycles ago, investment committee notes, meeting summaries, and reference conversations.

What matters is how those pieces connect.

When AI is wired into that flow (comparing documents across time, cross-checking disclosures, flagging when a manager’s story has evolved), it begins to feel less like a tool and more like an analyst who never forgets anything and never gets tired.

That architecture is usually invisible from the outside. It lives in the sequence of steps, the document sets combined, and the validation checkpoints before output reaches the IC. It is also incredibly difficult to replicate, precisely because it is not a product anyone can buy, but a process a firm has to build.
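To make the idea of comparing documents across time concrete, here is a deliberately simple Python sketch. It assumes DDQ answers have already been extracted into question-to-answer mappings (the keys, manager details, and values below are invented for illustration) and flags only the questions whose answers changed between two cycles. In practice a step like this would more likely act as a cheap pre-filter before a model or an analyst looks at the flagged items.

```python
def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so cosmetic edits are not flagged."""
    return " ".join(answer.lower().split())


def changed_answers(
    previous: dict[str, str], current: dict[str, str]
) -> dict[str, tuple[str, str]]:
    """Return {question: (old_answer, new_answer)} for answers that differ."""
    flagged = {}
    # Only compare questions that appear in both cycles.
    for question in sorted(previous.keys() & current.keys()):
        if normalize(previous[question]) != normalize(current[question]):
            flagged[question] = (previous[question], current[question])
    return flagged


# Hypothetical extracts from two diligence cycles for the same manager.
ddq_2022 = {
    "cio": "Jane Doe is sole CIO.",
    "aum_usd_m": "850",
    "administrator": "Acme Fund Services",
}
ddq_2024 = {
    "cio": "Investment decisions are made by a three-person committee.",
    "aum_usd_m": "850",
    "administrator": "acme fund  services",  # same answer, different formatting
}

for question, (old, new) in changed_answers(ddq_2022, ddq_2024).items():
    print(f"{question}: '{old}' -> '{new}'")
```

Here only the `cio` answer is flagged, which is exactly the kind of shift in a manager's story that deserves a follow-up question.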

3. Data compounds quietly into your system of record

If there is one asset most allocators underestimate, it is their historical diligence data.

AI models are capable of sophisticated reasoning, but the quality of their output depends heavily on the richness of the context provided. A model working with ten years of structured manager data (track records, ODD findings, engagement history, and red flags surfaced and resolved) will produce materially better insights than one working from a single uploaded document.

Better data leads to better insights.

Better insights lead to better decisions.

Better decisions generate better future data.

That feedback loop, once established, compounds quietly in the background. Teams that started organizing and structuring their historical diligence data two years ago are already ahead, and the gap will widen.

This is also the most durable form of AI IP. Prompt libraries can be rebuilt. Workflows can, with enough effort, be copied. But a decade of structured institutional data, maintained with discipline and fed into models that understand your investment framework, is nearly impossible to replicate quickly.

4. Someone has to own the moat

This is the gap most teams have yet to address.

Prompt libraries degrade if no one maintains them. Workflows drift if no one governs them. Data quality erodes if no one owns it. The allocator firms that will compound their AI advantage are those that treat these assets the way they treat any other institutional process, with clear ownership, regular review, and accountability.

That does not mean a dedicated AI team. It might mean a single senior person who owns the prompt library and reviews it quarterly. A simple log of what changed and why. A standing agenda item in the quarterly meeting. Governance does not need to be heavy; it just needs to exist.
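Lightweight governance of this kind can be as small as a list of assets, owners, and last review dates, plus a check for anything overdue. The Python sketch below is one hypothetical way to express that; the asset names, owners, dates, and the 90-day interval standing in for "quarterly" are all assumptions made for illustration.

```python
from datetime import date, timedelta

# Assumption: "quarterly" is approximated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)

# Hypothetical register of AI assets, each with a single accountable owner.
assets = [
    {"asset": "prompt_library", "owner": "senior_analyst", "last_reviewed": date(2024, 1, 10)},
    {"asset": "odd_workflow", "owner": "head_of_odd", "last_reviewed": date(2024, 5, 2)},
]


def overdue(register: list[dict], today: date) -> list[str]:
    """Return the names of assets not reviewed within REVIEW_INTERVAL."""
    return [
        item["asset"]
        for item in register
        if today - item["last_reviewed"] > REVIEW_INTERVAL
    ]


print(overdue(assets, date(2024, 6, 1)))  # → ['prompt_library']
```

A list like this is enough to feed the standing agenda item: anything it returns gets reviewed, and the review date is updated afterward.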

The firms that stumble here are not the ones that tried AI and failed. They are the ones that tried AI, saw early results, and then let the process quietly atrophy because no one was accountable for maintaining it.

5. The people who build these systems are part of the moat

There is a talent dimension to this that rarely gets discussed.

Analysts who learn to build, refine, and govern AI processes are developing a skill set that compounds just as the systems do. They understand where the model breaks down. They know which prompts produce reliable outputs and which produce plausible-sounding nonsense. They have developed an intuition for when to trust AI and when to push back.

That judgment, knowing how to work with AI well, is not something that can be acquired overnight. It is built through iteration. The moat is not just the system; it is the people who know how to improve it.

6. The firms that improve fastest will pull away

The biggest gains from AI do not come from the first deployment, but from what happens afterward.

The first version of a prompt is rarely great. The first workflow usually misses something. But teams that pay attention to where the model misunderstood context, where a prompt was too vague, and where a missing document left a gap in the analysis improve quickly.

Every correction sharpens the system. After a series of disciplined iterations, the difference between a firm that has been refining its AI processes and one that just started will be significant and visible.

The advantage is not static; it is a rate of improvement. And rates of improvement, compounded, create gaps that are very hard to close.

7. Some things should never be delegated to a machine

Not every part of manager selection should be handed to AI, and the most important design choice in building these systems is understanding exactly where to draw that line.

There are still aspects of the allocator’s craft that depend on something AI cannot replicate. Sensing whether a GP’s conviction is real or performed. Reading the cultural dynamics inside a firm through an on-site visit. Understanding why a senior partner just left, when the official explanation does not quite add up.

AI can surface the patterns that make those conversations more targeted. It can flag the inconsistencies that tell you which questions to ask. But the judgment call at the end, the one that actually matters, still belongs to the investor.

The firms that use AI best are not the ones trying to automate the most. They are the ones that are most precise about what stays human.

Where this is heading

Three years from now, the winning allocators will not simply be the ones that adopted AI. Most will have done that.

The firms that stand out will be the ones that treated AI adoption as an opportunity to encode their institutional knowledge into something durable: a prompt library that reflects how they think, a workflow that reflects how they work, a data asset that reflects what they have learned.

The models themselves will keep improving. New capabilities will arrive. The competitive landscape will shift.

But the firms that started building their AI IP deliberately (owning it, governing it, refining it) will carry something into that future that their competitors will not.

Not a better model. A better version of themselves.